A brief context

The balance of power, or walking the fine line between energy supply and energy demand, is one vital point a power utility has to address. The process is relatively straightforward: utilities distribute energy and consumers (households, companies) consume it. Everything would be fine if not for the numerous cases when demand exceeds supply. And yes, this can happen even when co-generation sources exist: they are, I might add, variable, and the stored or available energy may not be enough to fill the gap in supply. When this happens, blackouts can leave entire neighborhoods in darkness or without heating or cooling. This usually happens on hot summer or cold winter days, when people consume extra energy to cool or heat their homes and offices. In summer, for instance, consumption spikes usually occur in the afternoon. For the sake of clarity, I must mention that the reverse is also undesirable. Too much excess supply leads to extra costs on the utility side, which in the long run can be reflected in higher electricity prices. Buying energy that ends up unused costs a lot of money.

The road to AI …

It is therefore in the best interest of utilities to maintain the balance between supply and demand. It is at this point that artificial intelligence, and specifically its subset of machine learning algorithms, comes into the picture. Machine learning algorithms do a relatively good job of predicting future behavior based on past recorded data and can therefore provide insight into the probable future energy demand. In doing so they rely on historical information comprising not only consumed energy but also the extrinsic factors that influence it: weather and human behavior (for instance timetables and personal habits), to name but a few.
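To give a feel for what "predicting from past recorded data" can look like, here is a minimal sketch that fits a straight line between afternoon temperature and demand using ordinary least squares. The numbers are made up for illustration, and the names `fit_line` and `predict_demand` are my own; a real forecaster would use far more history, more factors (hour of day, holidays, habits), and most likely a more capable model.

```python
from statistics import mean


def fit_line(xs, ys):
    """Ordinary least squares fit of y = intercept + slope * x."""
    x_bar, y_bar = mean(xs), mean(ys)
    sxx = sum((x - x_bar) ** 2 for x in xs)
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = y_bar - slope * x_bar
    return intercept, slope


# Hypothetical history (assumption, not real data):
# summer afternoon temperature in deg C and the demand recorded in MWh.
temps = [24, 26, 28, 30, 32, 34]
demand = [101, 109, 121, 129, 141, 149]

intercept, slope = fit_line(temps, demand)


def predict_demand(temp_c):
    """Estimate demand (MWh) for a forecast temperature using the fitted line."""
    return intercept + slope * temp_c


print(f"Each extra degree adds about {slope:.1f} MWh")
print(f"Estimated demand at 36 deg C: {predict_demand(36):.0f} MWh")
```

A straight line only makes sense here because the sample covers hot days, where demand climbs with temperature. Over a full year the relationship bends (cold days also raise demand), which is one reason richer models are used in practice.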

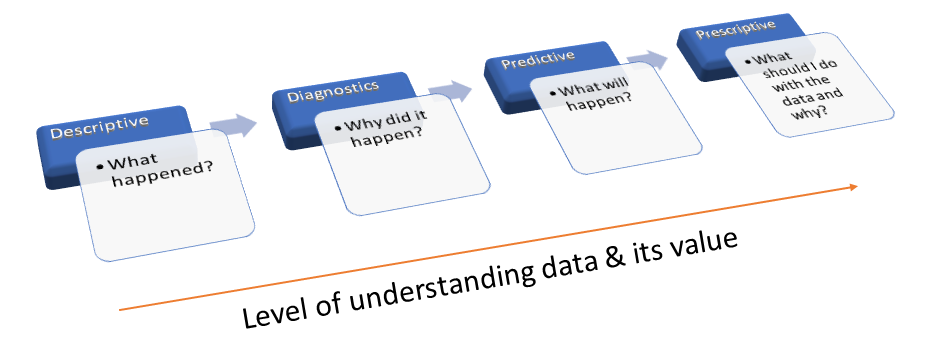

Despite its increased popularity alongside Big Data, machine learning is not a new science and has been around since the 1950s. Its potential for Data Science, especially in answering questions such as "what will happen?" or, more importantly, "what should I do with the data, and why?", made it a rising star. Answering each of these questions requires different machine learning and AI techniques.

Its use in the energy sector has gained momentum in the past few years, as seen in the graphic published by Scheidt et al. in a 2020 paper in the Energy and AI journal, which shows the steep rise in the number of papers addressing data analytics in the electricity sector.

… goes through the smart grid

To get to the point of predicting demand and performing data analytics, utilities first need to automate the entire process of collecting, communicating, storing, monitoring, and analyzing energy consumption and its related data, securely and efficiently. This is the essence of the smart grid, and at its center lies the smart meter, a device capable of collecting, storing, and communicating energy consumption data. Automating the entire workflow also has the advantage of enabling remote control of various devices such as appliances, but I will talk about this in a different post. You will find a lot of definitions for the smart grid around the Internet, but all of them revolve around the same idea I mentioned here.

The weakest link

As you may recall, a chain is only as strong as its weakest link, and in smart grids we have several links to which we must pay attention. I will discuss only a few here:

- Communication: this may seem like a trivial task, as we all live in a digital world swamped with mobile devices streaming all kinds of data across the Internet. A smart meter has a simple job: read the consumption and send it to the utility over a transmission medium such as WiFi, radio, or optical fiber. Ideally, this should be accomplished in real time, for purposes that I will discuss in another post. Whether real-time communication can be achieved depends on the underlying technology. Let’s take two different examples. In the US, PG&E relies on radio frequencies (LA DWP took a similar approach back in 2013-2015 for its SGRDP project) to transmit data 4 times a day whereas, in Norway, the smart grid relies on a Zigbee gateway to report real-time measurements. The choice of technology therefore limits how close to real time a utility can make its decisions.

- Data storage: collecting and integrating all data requires suitable storage. The data coming from smart meters is not clean: it contains errors, gaps, duplicates, and outliers, all of which have to be dealt with before the data is inserted into the database. And to paraphrase a well-known saying, not all errors are in fact errors, as I will show in a future post.

- Data analysis: while many may be familiar with Excel for some level of data analysis, more advanced tools are needed, many of which require at least basic programming skills (in R or Python, for example). This analysis requires powerful machine learning algorithms that are accurate and fast enough to enable timely decisions. There are cases when events happen unexpectedly and require quick reaction times. Without a fast communication medium and accurate analysis, people could be left without power for a long period of time.

- Data security: remember that smart grids are about collecting personal data from smart meters and also about controlling your appliances. As a result, not only must the data be anonymized, but access to the network must also be secure. A paper written back in 2012 showed that by looking at unencrypted smart grid data one could identify even the type of TV program you are watching, as each has a unique power fingerprint. For a general public article see here.

There are others but I will leave it to you to add your thoughts in the comments below.
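To make the data storage point a bit more concrete, here is a minimal sketch of the kind of checks that could run before readings reach the database: dropping duplicate timestamps, listing gaps, and flagging suspicious values. Everything in it is an assumption for illustration: the function name `clean_readings`, the 15-minute reporting interval, and the crude "five times the median" outlier rule. Note that outliers are only flagged, not deleted, precisely because not every odd-looking value is an error.

```python
from datetime import datetime, timedelta
from statistics import median


def clean_readings(readings, interval=timedelta(minutes=15), outlier_factor=5.0):
    """Check (timestamp, kwh) readings from a single meter.

    Returns (deduped, gaps, outliers):
      deduped  - readings sorted by time, first value kept per timestamp
      gaps     - expected timestamps for which no reading arrived
      outliers - readings that are negative or far above the median
    """
    if not readings:
        return [], [], []

    # Duplicates: keep the first value seen for each timestamp.
    first_seen = {}
    for ts, kwh in readings:
        first_seen.setdefault(ts, kwh)
    deduped = sorted(first_seen.items())

    # Gaps: walk between consecutive readings and list the missing slots.
    gaps = []
    for (prev_ts, _), (next_ts, _) in zip(deduped, deduped[1:]):
        expected = prev_ts + interval
        while expected < next_ts:
            gaps.append(expected)
            expected += interval

    # Outliers: a deliberately crude rule (assumption), flag but do not drop.
    typical = median(kwh for _, kwh in deduped)
    outliers = [
        (ts, kwh)
        for ts, kwh in deduped
        if kwh < 0 or kwh > outlier_factor * typical
    ]
    return deduped, gaps, outliers


# Made-up sample: one duplicate, one missing slot, one suspicious spike.
t0 = datetime(2021, 1, 1, 0, 0)
raw = [
    (t0, 0.30),
    (t0, 0.30),
    (t0 + timedelta(minutes=15), 0.32),
    (t0 + timedelta(minutes=45), 0.31),
    (t0 + timedelta(minutes=60), 9.50),
]

deduped, gaps, outliers = clean_readings(raw)
print(len(deduped), "unique readings")
print("missing slots:", gaps)
print("flagged for review:", outliers)
```

In a real pipeline the flagged readings would go to a review step or a more careful statistical test rather than being trusted or discarded outright.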

So is it worth it?

The short answer: Yes. I will not enter into a debate here about the impact of radio waves. The reality is that we live in an interconnected world where we are surrounded by radio signals and everything is increasingly automated and, hopefully, getting smarter. Smart grids are a requirement if we are to live better, greener, and cheaper, and they are also part of the envisioned smart city and possibly smart world.